Every product team faces the same tension: bold executive vision versus finite engineering capacity. Sometimes that vision is brilliant and leads to the feature customers didn’t know they needed yet. Other times, it’s a hero feature destined to drain resources while customers wait for what they actually want.

The problem isn’t executive ideas. It’s building them without validation.

Hero features make for compelling narratives in strategy meetings. They differentiate and inspire. But passion isn’t the same as customer demand. And once shipped, features are nearly impossible to remove, even when adoption is low.

The solution isn’t saying no to leadership. It’s using research to figure out which bold bets are worth making. A structured framework that moves from open customer exploration to quantified trade-offs gives you the evidence to prioritize confidently.

The Problem With “Hero Features”

Hero features are the big, bold ideas that get executives excited. They often emerge from competitor envy, conference inspiration, or personal conviction about where the market is heading.

Here’s the thing: sometimes executive intuition is spot-on. Visionary leaders build visionary products.

But hero features are seductive in ways that cloud judgment. They make for great narratives. They’re the kind of capability you announce at a company all-hands to genuine applause. They look impressive in investor decks.

None of that means the market wants them. If you see any of the following patterns, pause and reconsider:

- The feature originated in an executive retreat, not a customer conversation. It came from internal brainstorming or competitive analysis. No customer asked for it.

- The justification is “trust me” or “I know this market.” There’s an appeal to authority rather than evidence. Experience matters, but it’s not a substitute for validation.

- No one can articulate which customer segment is asking for this. Push on who needs it and the answers get vague: “Enterprise customers will love it” or “The market wants it.” These are assumptions, not insights.

- There’s pressure to “just build it” without validation. “We need to ship this by Q3” or “Let’s get it out there and iterate.” The implicit message: research will slow us down.

The Real Cost of Getting It Wrong

Building the wrong feature isn’t just a missed opportunity. It’s expensive in ways that compound over time.

1. Engineering months spent. A mid-sized feature might consume 3-6 months of development time. Multiply that by your engineering team’s loaded cost, and you’re looking at hundreds of thousands of dollars for a feature that might see minimal adoption.

2. UI complexity added. Every new feature adds cognitive load. Menus get longer. Settings multiply. New users get confused. Your product becomes harder to explain and harder to use. Even customers who never touch the hero feature pay a tax in complexity.

3. Support burden increases. New features mean new documentation, new training, new support tickets. Your customer success team must now explain a feature that most customers don’t need.

4. Roadmap backlog for features people want. This is the opportunity cost. While you’re building the hero feature, you’re not building the three things customers keep requesting. You’re not fixing the workflow that causes daily frustration.

5. Nearly impossible to remove once shipped. Even if adoption is low, removing a feature is politically and technically painful. Some customers will be using it. Product Marketing made promises. It’s in your slide deck. And your codebase now has dependencies on it. What was meant to be “ship and iterate” becomes permanent baggage.

The pattern is consistent: excitement in the conference room, ambivalence in the market, regret on the roadmap.

The Research Framework for Feature Prioritization

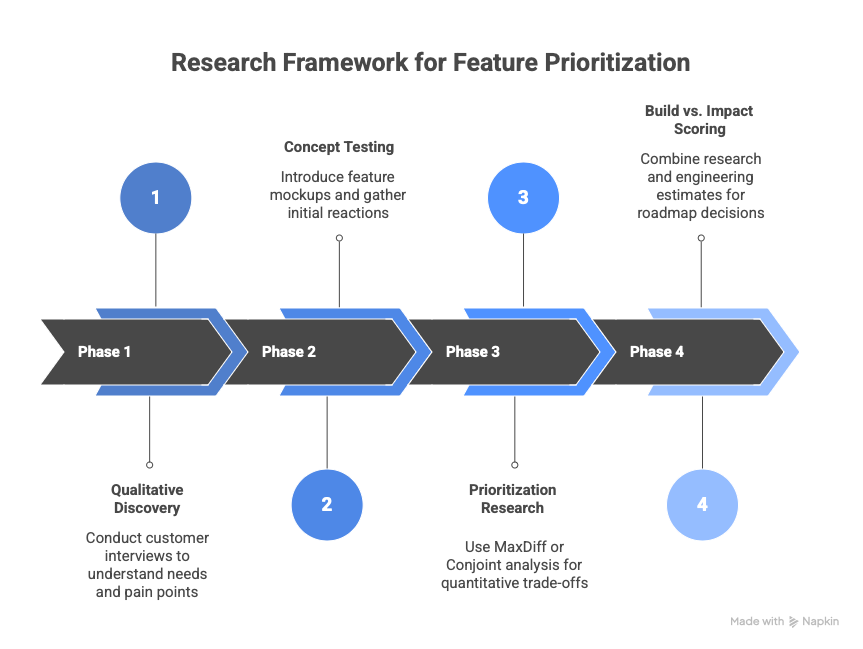

The goal isn’t to prove the hero feature is wrong. It’s to figure out if it’s right. That requires a structured process that moves from open exploration to quantified trade-offs.

Phase 1: Qualitative Discovery (Understanding the “Why”)

Start with customer interviews that don’t mention the feature at all. Explore their everyday world in an open-ended way:

- What are you trying to accomplish in [relevant domain]?

- What’s frustrating about how you do this today?

- Walk me through the last time you [relevant task].

- What workarounds have you built?

You’re listening for unprompted mentions, emotional intensity, and whether the problem the hero feature solves even registers. If customers never bring up the pain point organically, that’s signal.

Key deliverable: Is this even a real problem customers have? And if so, how acute is it?

Phase 2: Concept Testing (Gauging Interest)

Now introduce the feature. Show mockups, descriptions, or prototypes. Make it tangible enough to react to. Ask structured questions:

- Would you use this? How often?

- What value would this provide to you?

- What problem does this solve that you can’t solve today?

- If you had to choose between this and [current top request], which matters more?

Watch for the difference between polite interest (“that’s nice”) and genuine excitement (“when can I get this?”). Pay attention to how clearly customers can articulate the value. If they struggle to explain why they’d use it, they probably won’t.

Key deliverable: Appeal scores, anticipated usage frequency, value articulation. Evidence of whether this resonates or falls flat.

Phase 3: Prioritization Research (Quantitative Validation)

This is where you force trade-offs. Use MaxDiff or conjoint analysis to have customers weigh the hero feature against other roadmap items.

In a MaxDiff survey, respondents see small sets of features and answer: “Which of these would be most valuable to you? Which least valuable?” The question repeats across different combinations until you have enough responses for a statistically valid priority ranking.

This removes politeness bias. Customers can say everything sounds good in an interview. But when forced to choose, their true priorities emerge.

Key deliverable: An objective priority ranking with statistical confidence. You’ll know if the hero feature ranks #2 or #9 out of 12 potential investments.
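As a rough illustration of how MaxDiff answers turn into a ranking, here is a minimal sketch of best-minus-worst counting, one of the simplest ways to score this kind of survey. The feature names and the response format are hypothetical, invented for this example. Counting gives only a first look: it does not produce the statistical confidence mentioned above, which usually comes from fitting a choice model in a dedicated survey tool.

```python
from collections import Counter


def maxdiff_count_scores(responses):
    """Return best-minus-worst count scores per feature.

    Each response is a dict describing one survey task: the features
    shown, plus the respondent's "best" and "worst" picks
    (a hypothetical format for this sketch).
    Scores run from -1 (always picked worst) to +1 (always picked best).
    """
    shown, best, worst = Counter(), Counter(), Counter()
    for task in responses:
        shown.update(task["shown"])
        best[task["best"]] += 1
        worst[task["worst"]] += 1
    # Normalize by exposure so features shown more often are not favored.
    return {f: (best[f] - worst[f]) / shown[f] for f in shown}


def rank_features(scores):
    """Sort feature names from highest to lowest score."""
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical data: four tasks, three features per task.
responses = [
    {"shown": ["ai_alerts", "dashboard_filters", "bulk_export"],
     "best": "ai_alerts", "worst": "bulk_export"},
    {"shown": ["ai_alerts", "sso", "dashboard_filters"],
     "best": "ai_alerts", "worst": "sso"},
    {"shown": ["bulk_export", "sso", "ai_alerts"],
     "best": "ai_alerts", "worst": "sso"},
    {"shown": ["dashboard_filters", "bulk_export", "sso"],
     "best": "dashboard_filters", "worst": "sso"},
]

scores = maxdiff_count_scores(responses)
for name in rank_features(scores):
    print(f"{name}: {scores[name]:+.2f}")
```

In this made-up data the hero feature (`ai_alerts`) is picked as best every time it appears, so it lands at the top. With real responses, the same tally shows at a glance whether it sits near the top or the bottom of the list.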

Phase 4: Build vs. Impact Scoring

Combine research findings with engineering effort estimates. Create a simple framework:

Feature Score = (Customer Value Score × Addressable Segment Size) ÷ Development Effort

Customer value comes from the Phase 3 rankings. Segment size reflects how many customers the feature applies to. Development effort is engineering’s estimate of the work involved.

Plot everything on a 2×2 matrix:

- High Impact / Low Effort: Build these first

- High Impact / High Effort: Strategic bets, plan carefully

- Low Impact / Low Effort: Quick wins if capacity allows

- Low Impact / High Effort: Probably don’t build

This creates shared language. Product, engineering, and leadership can all look at the same data and understand the trade-offs.

Key deliverable: A prioritized roadmap where decisions are transparent and defensible. The hero feature earns its spot (or doesn’t) based on evidence, not who suggested it.
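The scoring formula and the 2×2 matrix can be sketched in a few lines. This is an illustration under stated assumptions, not a prescribed implementation: the feature names, the 0-100 value scale, the effort unit (months), and both cutoffs are hypothetical, and "impact" is taken here to mean customer value multiplied by segment size, which is one reasonable reading of the matrix axes.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    customer_value: float  # assumed 0-100 scale derived from Phase 3
    segment_share: float   # fraction of customers affected, 0-1
    effort_months: float   # engineering's estimate (assumed unit)

    @property
    def impact(self) -> float:
        # Assumption: impact = value x addressable segment size.
        return self.customer_value * self.segment_share

    @property
    def score(self) -> float:
        # Feature Score = (value x segment size) / development effort
        return self.impact / self.effort_months


def quadrant(f: Feature, impact_cutoff: float, effort_cutoff: float) -> str:
    """Place a feature in the 2x2 matrix using team-chosen cutoffs."""
    high_impact = f.impact >= impact_cutoff
    high_effort = f.effort_months > effort_cutoff
    if high_impact:
        return "Strategic bet" if high_effort else "Build first"
    return "Probably don't build" if high_effort else "Quick win"


# Hypothetical roadmap candidates.
features = [
    Feature("ai_alerts", 80, 0.6, 6),
    Feature("dashboard_filters", 60, 0.8, 2),
    Feature("bulk_export", 30, 0.5, 1),
    Feature("custom_themes", 20, 0.3, 5),
]

for f in sorted(features, key=lambda x: x.score, reverse=True):
    label = quadrant(f, impact_cutoff=40, effort_cutoff=3)
    print(f"{f.name}: score={f.score:.1f}, {label}")
```

The cutoffs are a judgment call for each team. What matters is that they are set before the scores are computed, so that no feature, hero or otherwise, gets its own threshold.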

When Research Says “Actually, Build It”

Here’s the important part: research doesn’t always kill the hero feature. Sometimes it validates executive intuition completely.

Consider the CEO of a data protection software company who feels strongly that the product needs AI-powered notifications to alert customers to unusual data storage patterns. His reasoning: the tool is reactive. It collects data on storage behavior, but users have to review it manually and make judgment calls. He sees an opportunity to make customers proactive.

The internal team is skeptical. Engineers have historically focused on reporting features. Customers aren’t submitting support tickets asking for proactive monitoring. The product roadmap is full of requested improvements to existing dashboards. Why divert resources to something no one is asking for?

This is where the research opportunity comes in. It starts with qualitative interviews to understand how customers monitor their data. Then comes concept testing to collect feedback on a potential AI notification feature.

How do we know if the CEO’s intuition is right? We follow the data. Do customers see the idea and love it? Is it something they never knew to ask for, but once they see it they want to know when it will be available? Can customers understand the value and articulate specific scenarios where it would save them time and catch problems earlier? Does prioritization testing rank it in the top tier of potential features?

If so, the CEO’s instinct was right, and now the entire organization believes it too. Engineers no longer question the feature’s priority because they can see that demand is real. Product marketing can craft messaging with confidence because they have heard customers articulate the value in their own words. Even sales gets excited because they know the feature aligns with what customers want.

This is why you do the research. Not to prove executives wrong, but to be confident either way. To know you’re building the right thing, regardless of where the idea originated.

Making This Repeatable

One successful research project doesn’t fix the hero feature problem permanently. You need to make evidence-based prioritization a systematic part of how roadmap decisions get made.

Establish Research as Part of the Process. Research can’t be a special circumstance you invoke when you’re nervous about an idea. It needs to be the default. Create a “feature intake” system where every significant roadmap item gets evaluated the same way.

This removes politics. It’s not about who suggested it. It’s about whether customers need it.

Build a Research Repository. Document every decision and its rationale. When a feature gets deprioritized based on research, capture why. When a feature gets built because research validated it, record that too.

This creates institutional memory. Six months later, when someone asks, “Why didn’t we build that analytics dashboard?” you can point to the research that showed customers ranked it 9th out of 12 priorities. The decision isn’t mysterious. It’s documented.

It also prevents re-litigating the same debates. “We already tested that concept in Q2. Here’s what customers said.”

Celebrate the Wins. Make it visible when research saves the organization from costly mistakes. “Remember when we almost built that collaboration feature? Research showed 12% interest. We built the workflow automation instead, and adoption hit 67% in the first quarter.”

Equally important: celebrate when research validates a bold bet. “The CEO’s AI monitoring idea tested through the roof. We built it, customers love it, and it’s become a differentiation point in sales.”

This reinforces that research isn’t about blocking innovation. It’s about directing resources toward innovation that matters.

Create Psychological Safety. The most important cultural shift: it needs to be okay for anyone’s idea to get deprioritized if the data says so.

That requires leadership modeling the behavior. When an executive’s feature ranks low in research, they need to be the first to say, “Okay, the data is clear. Let’s focus on what customers actually need.” That sets the tone for the entire organization.

When that happens consistently, product teams stop being afraid to suggest research, because they know that surfacing data that changes direction isn’t career-limiting. It’s career-building.